Are We Thinking About AI Music the Wrong Way?

For the longest time, music creation was gated behind access.

Access to studios.

Access to producers.

Access to session musicians.

Access to expensive software and hardware.

Access to people who could turn rough ideas into something listenable.

That reality shaped who got to participate.

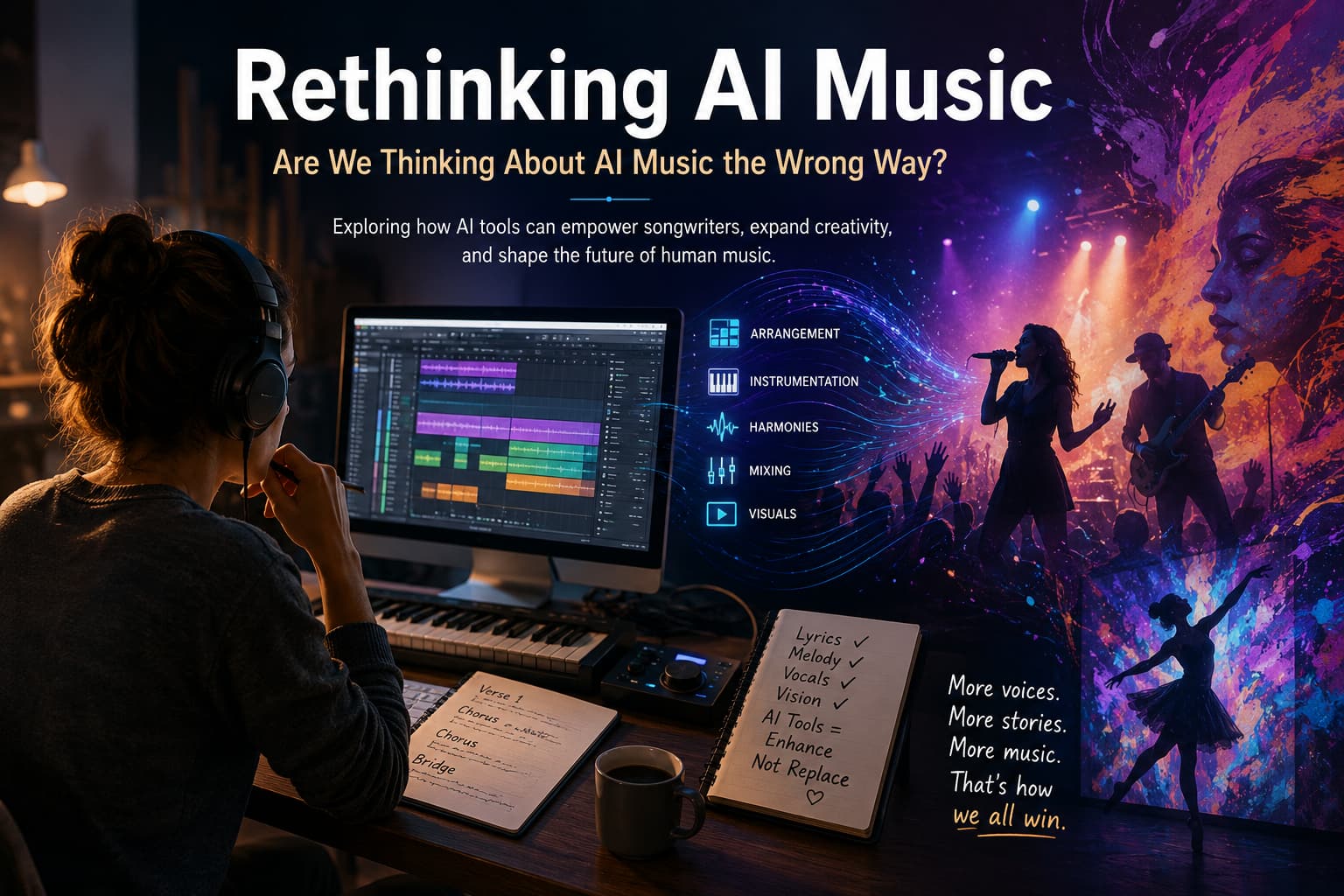

Today, AI music tools are forcing a difficult conversation. But honestly, a lot of the discussion online feels incomplete. Everything gets lumped into one giant category called “AI music,” even though there are massive differences between fully generated songs and human-written songs that simply use AI-assisted workflows.

Those are not the same thing.

The Missing Distinction

Imagine a workflow where the songwriter still:

- writes the lyrics,

- creates the melody,

- records the vocals,

- shapes the emotional direction,

- and guides the final artistic vision.

The AI is not replacing authorship. It is helping with arrangement, instrumentation, demo production, and iteration.

That starts looking a lot less like “press button, receive song” and a lot more like an evolved songwriting workflow.

Technology has always played a role in music creation. The difference now is speed.

In many ways, songwriting has always involved layers of assistance:

- producers shaping records,

- session musicians interpreting ideas,

- engineers transforming raw recordings,

- MIDI mockups,

- scratch tracks,

- digital editing,

- vocal processing.

Creative tools have always evolved.

Lower Barriers, More Voices

One of the most overlooked aspects of AI-assisted music is accessibility.

A songwriter with limited resources can suddenly:

- hear ideas take shape faster,

- experiment with genres,

- prototype arrangements,

- test harmonies,

- and refine demos without spending thousands of dollars upfront.

That matters.

There are probably incredible songwriters out there who never had the means to fully develop their ideas before.

And when more people can participate creatively, music itself changes.

Different cultures.

Different experiences.

Different emotional perspectives.

Different spiritual ideas.

Different ways of hearing rhythm and melody.

That diversity has always pushed music forward.

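Entire genres were born when technology lowered barriers and new creators finally gained access. Hip-hop, house, and techno, for example, grew out of turntables, samplers, and affordable drum machines.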

What If AI Music Creates More Human Collaboration?

Ironically, AI-assisted workflows may create more opportunities for human creativity rather than fewer.

If more songs are being developed, more songs will eventually need:

- mixing,

- mastering,

- live instrumentation,

- vocal coaching,

- video production,

- choreography,

- visual branding,

- animation,

- performance direction,

- and audience engagement.

People often assume automation only removes jobs. Historically, creative technology has tended to redistribute effort instead.

When one barrier falls, artists invest their energy elsewhere.

We already saw this happen with home studios and digital audio workstations. Easier recording did not kill music creation. It made it explode.

The Rise of Performer-Centered Creativity

One fascinating possibility is the emergence of performer-centered workflows.

Not every artist is primarily a producer or composer.

Some artists are powerful because of:

- interpretation,

- phrasing,

- tone,

- vulnerability,

- stage presence,

- emotional delivery,

- and performance identity.

AI-assisted systems and vocal personas could open entirely new creative paths for singers and performers who previously lacked access to production infrastructure.

A performer might:

- develop recognizable vocal identities,

- collaborate rapidly with songwriters,

- experiment with multiple genres,

- build immersive live experiences,

- or combine music with visual storytelling and dance.

That is still deeply human artistry.

Music Is Becoming Multimedia

Music today rarely exists in isolation.

Songs increasingly connect with:

- short-form video,

- visual storytelling,

- projection art,

- live painting,

- animation,

- immersive performance,

- interactive experiences,

- and digital communities.

If artists can prototype songs faster, they may spend more time building worlds around those songs.

Some performers may create live visual art during performances. Others may integrate dance, cinematic storytelling, or real-time audience interaction into the musical experience itself.

That does not reduce creativity.

It expands where creativity can happen.

The Legal and Cultural Shift

Another possibility people rarely discuss is that AI music companies themselves may eventually move toward more human-centered workflows.

The more users contribute:

- original lyrics,

- melodies,

- vocals,

- arrangements,

- and creative direction,

the more these platforms begin to look less like autonomous music generators and more like creative production environments.

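That distinction could matter culturally and legally. The U.S. Copyright Office, for example, has said that copyright protects human-authored contributions, not material generated entirely by AI.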

Instead of:

“The AI made the song.”

the conversation becomes:

“The songwriter used AI-assisted production tools.”

Those are very different ideas.

A Good Song Is Still Hard to Write

Despite all the anxiety around AI music, one thing remains true:

A powerful tool in the hands of someone with nothing meaningful to say still produces forgettable work.

Lower barriers do not eliminate the need for:

- taste,

- emotional honesty,

- songwriting ability,

- memorable melodies,

- lyrical depth,

- or artistic identity.

If anything, those qualities become even more important when everyone has access to similar tools.

The internet already contains endless amounts of technically competent but emotionally empty content. That problem existed long before AI.

At the end of the day, listeners still gravitate toward songs that make them feel something.

And that has always been human.

Final Thought

Maybe the future of music is not “human versus AI.”

Maybe it becomes a spectrum of workflows where the real distinction is not whether tools were involved, but whether genuine human creativity, intention, and expression remain at the center.

That feels like a much more useful conversation to have.